PathTrack: Fast Trajectory Annotation with Path Supervision

An efficient annotation method and a large dataset for multiple object tracking

Santiago Manen, Michael Gygli, Dengxin Dai, Luc Van Gool

Abstract

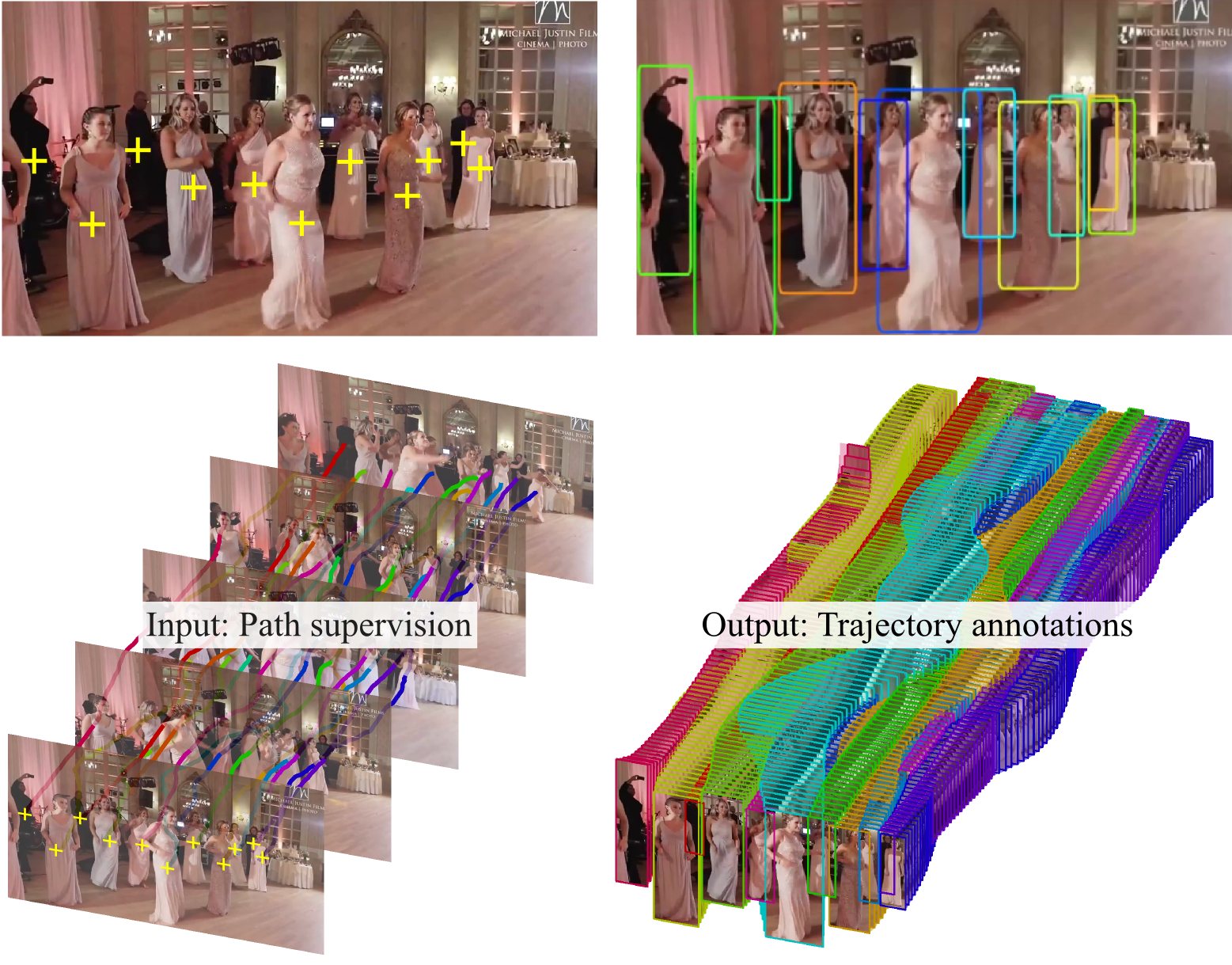

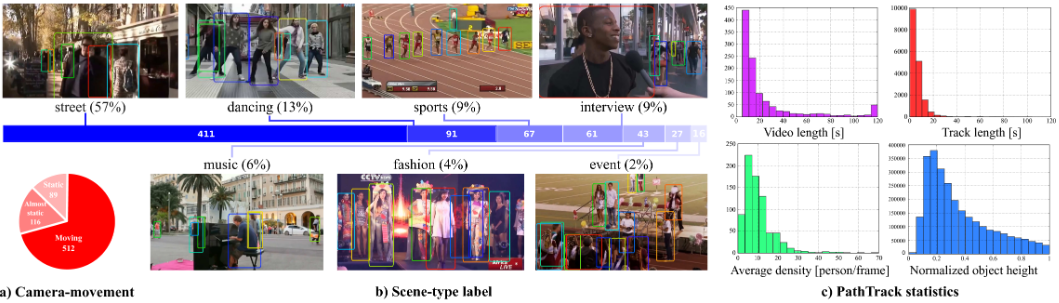

We present an efficient framework for annotating trajectories and use it to produce a multiple object tracking (MOT) dataset of unprecedented size. In our novel path supervision, the annotator loosely follows each object with the cursor while watching the video, providing a path annotation for every object in the sequence. Our approach turns these weak annotations into dense box trajectories. Experiments on existing datasets show that our framework produces more accurate annotations than state-of-the-art methods in a fraction of the time. We further validate the approach by crowdsourcing the PathTrack dataset, which contains more than 15,000 person trajectories in 720 sequences.
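To make the idea of turning a cursor path into box trajectories more concrete, here is a deliberately simplified sketch. It is not the PathTrack algorithm described in the paper. It assumes that each annotated frame has one cursor point and a list of scored person detections, picks the highest-scoring detection whose box contains the cursor, and linearly interpolates boxes between matched frames. The names `contains` and `path_to_boxes` are hypothetical.

```python
import bisect
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)
Point = Tuple[float, float]              # cursor position (x, y)


def contains(box: Box, p: Point) -> bool:
    """True if point p lies inside the box (inclusive borders)."""
    x1, y1, x2, y2 = box
    return x1 <= p[0] <= x2 and y1 <= p[1] <= y2


def path_to_boxes(path: Dict[int, Point],
                  detections: Dict[int, List[Tuple[Box, float]]],
                  num_frames: int) -> List[Box]:
    """Hypothetical helper: convert a sparse cursor path into one box per frame.

    Simplifying assumption: a frame is "anchored" by the best-scoring detection
    that contains the cursor; all other frames are linearly interpolated.
    """
    anchors: Dict[int, Box] = {}
    for t, p in path.items():
        # Detections in frame t whose box contains the cursor position.
        hits = [(score, box) for box, score in detections.get(t, []) if contains(box, p)]
        if hits:
            anchors[t] = max(hits, key=lambda h: h[0])[1]
    if not anchors:
        raise ValueError("no detection matched the annotated path")

    keys = sorted(anchors)
    boxes: List[Box] = []
    for t in range(num_frames):
        if t <= keys[0]:
            boxes.append(anchors[keys[0]])    # hold the first anchor before it
        elif t >= keys[-1]:
            boxes.append(anchors[keys[-1]])   # hold the last anchor after it
        else:
            # Surrounding anchors a <= t < b, then linear interpolation.
            i = bisect.bisect_right(keys, t)
            a, b = keys[i - 1], keys[i]
            w = (t - a) / (b - a)
            boxes.append(tuple((1 - w) * u + w * v
                               for u, v in zip(anchors[a], anchors[b])))
    return boxes
```

For example, with the cursor at (5, 5) in frame 0 and at (15, 5) in frame 2, and one matching detection in each of those frames, frame 1 receives the midpoint box between the two detections. The paper's actual method is more involved than this nearest-detection heuristic.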

Tracking approaches can benefit from training on such large-scale datasets, as object recognition did. We demonstrate this by re-training an off-the-shelf person matching network, originally trained on the MOT15 dataset, which almost halves its misclassification rate. Additionally, training on our data consistently improves tracking results, both on our dataset and on MOT15. On the latter, we improve the top-performing tracker (NOMT), reducing the number of ID switches by 18% and fragments by 5%.
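As a reminder of what the ID-switch count measures: under the CLEAR MOT convention used by MOTChallenge, an ID switch is counted when a ground-truth trajectory is matched to a different tracker ID than the one it was last matched to. The sketch below assumes that the per-frame ground-truth-to-hypothesis matching (usually an IoU threshold plus Hungarian assignment) has already been computed. `count_id_switches` is a hypothetical name, and this is not the official MOTChallenge devkit.

```python
from typing import Dict, List


def count_id_switches(matches: List[Dict[int, int]]) -> int:
    """Count ID switches from per-frame {gt_id: tracker_id} matches.

    Frames where a ground-truth object is unmatched are simply absent from
    that frame's dict; the last matched tracker ID is remembered across gaps.
    """
    last_match: Dict[int, int] = {}
    switches = 0
    for frame in matches:
        for gt_id, trk_id in frame.items():
            # A switch: this GT object was matched before, to a different ID.
            if gt_id in last_match and last_match[gt_id] != trk_id:
                switches += 1
            last_match[gt_id] = trk_id
    return switches
```

For instance, if ground-truth object 1 is matched to tracker IDs 10, 10, 11, 11 over four frames, it contributes one ID switch. Fewer switches mean the tracker keeps identities more consistently, which is what the reported 18% reduction reflects.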

Using our approach, we crowdsource a large dataset for multiple object tracking (MOT), named the PathTrack dataset. It features more than 15,000 person trajectories in 720 video sequences. The dataset can be downloaded via the link given below.

S. Manen, M. Gygli, D. Dai, L. Van Gool. PathTrack: Fast Trajectory Annotation with Path Supervision. In ICCV 2017.

This page has been edited by Dengxin Dai.